Data aggregation is the act of gathering information from one or more sources and combining it into a summarized form.

To put it another way, data aggregation entails collecting individual data points from various sources and organizing them into a simpler format, such as totals or other useful metrics.

Data is typically aggregated with the count, sum, and mean operators, but non-numeric data can be aggregated as well.
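
As a minimal sketch of these operators, the snippet below summarizes a small list of records. The `orders` records and their field names are invented for illustration. The numeric field is aggregated with count, sum, and mean, while the non-numeric fields are aggregated by taking the most frequent value and the set of distinct values.

```python
from collections import Counter
from statistics import mean

# Hypothetical order records; the field names are made up for illustration.
orders = [
    {"amount": 20.0, "country": "US", "status": "paid"},
    {"amount": 35.5, "country": "DE", "status": "paid"},
    {"amount": 12.5, "country": "US", "status": "refunded"},
    {"amount": 50.0, "country": "US", "status": "paid"},
]

amounts = [order["amount"] for order in orders]

summary = {
    # Numeric aggregations
    "count": len(amounts),
    "sum": sum(amounts),
    "mean": mean(amounts),
    # Non-numeric aggregations
    "most_common_country": Counter(o["country"] for o in orders).most_common(1)[0][0],
    "distinct_statuses": sorted({o["status"] for o in orders}),
}

print(summary)
```

Counting distinct values or picking the most frequent one are only two of several ways to summarize text fields. Which one fits depends on the question being asked.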

What Is Data Aggregation?

Data aggregation is the process of gathering information from various databases, spreadsheets, and websites and condensing it into a single report, dataset, or view. Data aggregators handle this procedure.

In more detail, an aggregation tool takes heterogeneous information as input. It then processes that input to produce aggregated results. Finally, it provides features for presenting and examining the resulting aggregated data.

Because it enables enormous amounts of information to be quickly and easily examined, aggregating data is especially helpful for data analysis.

This is because thousands, hundreds of thousands, or even millions of individual data entries can be condensed into a single row of aggregated data.
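
To make this concrete, here is a small sketch that condenses 100,000 synthetic entries into one summary row per group. The `aggregate_by` helper, the store names, and the sale values are all hypothetical and exist only for this example.

```python
from collections import defaultdict


def aggregate_by(rows, key, value):
    """Collapse rows into one summary row per distinct value of `key`."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row[value])
    return [
        {key: k, "count": len(v), "total": sum(v), "mean": sum(v) / len(v)}
        for k, v in sorted(groups.items())
    ]


# 100,000 synthetic entries spread over three hypothetical stores.
stores = ["north", "south", "west"]
entries = [{"store": stores[i % 3], "sale": (i % 10) + 1} for i in range(100_000)]

summary_rows = aggregate_by(entries, "store", "sale")
for row in summary_rows:
    print(row)
```

The 100,000 input entries end up as just three rows, one per store, each holding a count, a total, and a mean.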

Let’s now examine data aggregation in more detail.

Data Aggregation Use Cases

Aggregated data can be effectively used in a variety of industries, including:

1. Finance: To determine a customer’s creditworthiness, financial organizations compile information from various sources. They use it, for instance, to decide whether or not to grant a loan.

Aggregated data can also be used for market analysis, for example to identify trends.

2. Healthcare: Medical facilities inform treatment decisions and improve care coordination using data compiled from health records, diagnostic tests, and lab results.

3. Marketing: Companies compile information from their websites and social media accounts to track mentions, hashtags, and interactions.

This lets them determine whether a marketing strategy was successful. Aggregated customer and sales data is also used to make business decisions about future marketing campaigns.

4. Application Monitoring: To track application functionality, find new bugs, and resolve problems, software routinely gathers and aggregates data from the application and the network.

5. Big Data: Aggregating data makes it simpler to analyze the information that is available on a global scale and to store it in a database system for later use.

Issues with Data Aggregation

While data aggregation has many benefits, there are some drawbacks as well. Now let’s evaluate the three most significant difficulties.

1. Integrating Various Data Sources

Data is typically collected from a variety of sources. It is therefore likely that the input data comes in quite different formats.

In this case, the data aggregator must first process, normalize, and transform the data before combining it.

Particularly when dealing with Big Data or extremely complex datasets, this job can become very time-consuming and complex.

For this reason, it is advisable to parse the data before aggregating it. Data parsing is the process of converting raw data into a more usable form.

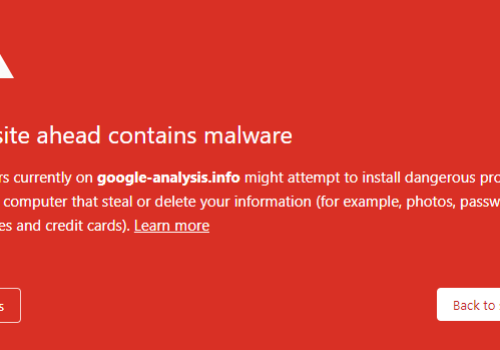
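
As an illustration of parsing before aggregating, the sketch below reads the same kind of record from a CSV source and a JSON source, converts both into a common schema, and only then sums the prices per product. The two sources, their field names, and the `parse_csv` and `parse_json` helpers are assumptions made up for this example.

```python
import csv
import io
import json

# Two hypothetical sources describing the same kind of record in different formats.
CSV_SOURCE = """product,price,currency
Widget,"1,200.50",usd
Gadget,80,USD
"""

JSON_SOURCE = '[{"name": "widget", "cost": "300.00 USD"}, {"name": "Gizmo", "cost": "45.5 USD"}]'


def parse_csv(text):
    """Yield records from the CSV source in the common schema."""
    for row in csv.DictReader(io.StringIO(text)):
        yield {
            "product": row["product"].strip().lower(),
            "price": float(row["price"].replace(",", "")),  # drop thousands separators
            "currency": row["currency"].strip().upper(),
        }


def parse_json(text):
    """Yield records from the JSON source in the common schema."""
    for item in json.loads(text):
        amount, currency = item["cost"].split()
        yield {
            "product": item["name"].strip().lower(),
            "price": float(amount),
            "currency": currency.upper(),
        }


# Normalize first, then aggregate across both sources.
records = list(parse_csv(CSV_SOURCE)) + list(parse_json(JSON_SOURCE))

totals = {}
for record in records:
    totals[record["product"]] = totals.get(record["product"], 0.0) + record["price"]

print(totals)
```

Because both parsers emit the same keys and types, the aggregation step does not need to know which source a record came from.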

2. Ensuring Compliance with Laws, Regulations, and Data Protection

Privacy must always be taken into account when working with data. This is particularly true of aggregation.

The reason is that you might need to use personally identifiable information (PII) to create a summary that accurately represents a group as a whole.

This is what happens, for instance, when public survey or election results are released.

As a consequence, data anonymization and data aggregation are frequently used together. Lawsuits and fines may result from violating privacy laws.

Ignoring the General Data Protection Regulation (GDPR), which protects the personal data of EU residents, can lead to fines of up to €20 million or 4% of annual worldwide turnover, whichever is higher.

Protecting sensitive data during aggregation is a significant challenge, but you have little choice in the matter.
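
One way anonymization and aggregation can work together is sketched below: PII fields are dropped before grouping, and groups with too few members are left out of the report so that no individual can be singled out. The survey records, the `PII_FIELDS` set, and the threshold of 3 are assumptions chosen for this example, not values prescribed by any regulation.

```python
from collections import defaultdict

# Hypothetical survey responses; "name" and "email" are the PII fields here.
responses = [
    {"name": "Ana", "email": "ana@example.com", "region": "north", "score": 8},
    {"name": "Ben", "email": "ben@example.com", "region": "north", "score": 6},
    {"name": "Cleo", "email": "cleo@example.com", "region": "north", "score": 7},
    {"name": "Dan", "email": "dan@example.com", "region": "south", "score": 9},
]

PII_FIELDS = {"name", "email"}
MIN_GROUP_SIZE = 3  # assumed threshold; pick one that suits your requirements


def strip_pii(record):
    """Return a copy of the record without the PII fields."""
    return {k: v for k, v in record.items() if k not in PII_FIELDS}


def aggregate_scores(records, min_group_size=MIN_GROUP_SIZE):
    """Average scores per region, skipping groups that are too small to publish."""
    groups = defaultdict(list)
    for record in map(strip_pii, records):
        groups[record["region"]].append(record["score"])

    report = {}
    for region, scores in groups.items():
        if len(scores) < min_group_size:
            continue  # too few respondents: a published value could expose an individual
        report[region] = {
            "respondents": len(scores),
            "mean_score": sum(scores) / len(scores),
        }
    return report


report = aggregate_scores(responses)
print(report)
```

Here the "south" region has a single respondent, so it is omitted from the report rather than published as a value that would reveal that person's answer.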

3. Creating Good Outcomes

The quality of the source data determines how reliable the results of a data aggregation procedure are. As a result, you must first confirm that the data you have gathered is accurate, complete, and relevant.

This is not as simple as you might think. For instance, consider making sure that the selected data is a representative sample of the population being studied. That is a difficult task.

Also keep in mind that aggregation results vary depending on granularity. For those who are unfamiliar with the term, granularity determines how finely the information is grouped and summarized.

When the granularity is too coarse, meaningful detail is lost. When it is too fine, you cannot see the big picture. The right granularity therefore depends on the results you are trying to achieve.

It might take a few tries to find the granularity that best suits your objectives.
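
The effect of granularity can be sketched by aggregating the same records at different levels. The sales figures and the `aggregate` helper below are hypothetical. The only thing that changes between the three results is the function that assigns each record to a bucket.

```python
from collections import defaultdict
from datetime import date

# Hypothetical sales records as (date, amount) pairs.
sales = [
    (date(2024, 1, 5), 100),
    (date(2024, 1, 5), 50),
    (date(2024, 1, 20), 70),
    (date(2024, 2, 3), 30),
    (date(2024, 2, 3), 90),
]


def aggregate(records, bucket):
    """Sum amounts per bucket, where `bucket` maps a date to a group key."""
    totals = defaultdict(int)
    for day, amount in records:
        totals[bucket(day)] += amount
    return dict(totals)


by_day = aggregate(sales, lambda d: d.isoformat())          # fine granularity
by_month = aggregate(sales, lambda d: d.strftime("%Y-%m"))  # medium granularity
by_year = aggregate(sales, lambda d: d.year)                # coarse granularity

print(by_day)
print(by_month)
print(by_year)
```

The daily view keeps individual spikes visible, the monthly view shows the trend between January and February, and the yearly view reduces everything to a single number.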

Data Aggregation With the Help of Bright Data

As we saw earlier, a data aggregation process begins with the retrieval of data from various sources. A data aggregator can therefore either use data that has already been gathered or retrieve it on the fly.

Keep in mind that the results of the aggregation depend on the accuracy of the data. Data collection is therefore crucial to aggregation.

Thankfully, Bright Data offers specific solutions for each stage of data collection. In particular, Bright Data provides a complete Web Scraper IDE.

With such a tool, you can retrieve large amounts of data from the internet while avoiding the difficulties associated with web scraping.

The Web Scraper IDE from Bright Data can be used to collect information as the very first step in an aggregation procedure. Bright Data also provides structured, ready-to-use datasets.

Purchasing them allows you to skip all data collection stages, greatly simplifying the aggregation process.

You can then use these datasets in a variety of scenarios. For example, the majority of hospitality brands rely on Bright Data’s travel data aggregation.

Thanks to this aggregated data, they can compare prices with competitors, track how customers search for and book trips, and forecast upcoming trends in the travel industry.

This is only one of the numerous areas where Bright Data’s capabilities, know-how, and data can be useful.

Conclusion: Data Aggregation 2024

You can maximize the value of your data through data aggregation. You can quickly identify insights and patterns by combining your data in summaries and views.

You can also support your business decisions with aggregated data. This is only possible if the aggregated results are trustworthy, which depends on the quality of the data sources.

That is why you should concentrate on data collection, and a solution like Bright Data’s web scraping tool provides everything required to retrieve the data you need.

Alternatively, you can purchase one of the many ready-made datasets that Bright Data has to offer.